What is Concurrency?

Have you ever wondered how your computer can run apps, edit documents, stream music on YouTube, and update software all at the same time? This multitasking is not a stroke of luck; it rests on a foundational concept in computer science known as concurrency.

Let’s explore what concurrency is, how it works, how it differs from related concepts like parallelism and multitasking, and why it is essential in modern software systems.

What is Concurrency?

Concurrency is the ability of a system to manage multiple tasks whose execution overlaps in time.

Unlike parallelism, concurrency does not require more than one task to execute at the same instant. It means multiple tasks are in progress during the same period. Put another way, a concurrent system handles several things so that they appear to run simultaneously.

The key word in the definition is “manage”. A concurrent system can manage multiple tasks by switching between them or coordinating their execution.

In computing terms, tasks (typically processes or threads) are interleaved so that each makes progress over a period of time.
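To make interleaving concrete, here is a minimal sketch of a round-robin scheduler built on Python generators. The names `task` and `run_round_robin` are illustrative assumptions, not part of any real scheduler; the point is only that each task advances one step at a time on a single thread.

```python
from collections import deque

def task(name, steps):
    """A task that hands control back to the scheduler after each step."""
    for i in range(1, steps + 1):
        yield f"{name} step {i}"

def run_round_robin(tasks):
    """Run each task one step at a time until all of them finish."""
    ready = deque(tasks)
    log = []
    while ready:
        current = ready.popleft()
        try:
            log.append(next(current))
            ready.append(current)   # not finished: back of the queue
        except StopIteration:
            pass                    # finished: drop it
    return log

interleaved = run_round_robin([task("A", 2), task("B", 3)])
print(interleaved)
# ['A step 1', 'B step 1', 'A step 2', 'B step 2', 'B step 3']
```

Neither task runs to completion before the other starts, yet only one step executes at any moment.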

Key Aspects of Concurrency

- Task/Thread Management: Multiple threads (the smallest units of execution that can be scheduled) or processes can be in progress at once; one does not have to finish before another starts.

- Interleaving: Concurrent tasks can be executed in an interleaved manner, with the system running part of one task and then part of another. This improves the use of system resources and responsiveness.

- Parallelism: On hardware with multiple cores, concurrent tasks can also execute in parallel. Even without extra cores, tasks that use different resources can overlap: when one app uses the processor and another waits on a disk drive, running both concurrently takes less time than running them sequentially.

- Shared Resource Handling: A concurrent system must manage shared resources such as files, data structures, and network connections to avoid conflicts and ensure reliable execution. Sharing introduces challenges such as deadlocks, synchronization complexity, and race conditions.

Real-Life Analogy

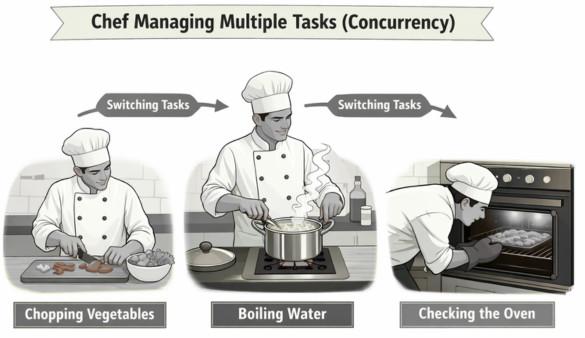

As a real-life analogy of concurrency, imagine a chef in a kitchen (single-core system) working simultaneously on chopping vegetables, boiling water, and checking the oven. This system is shown in the following figure:

The chef continuously switches between these tasks to prepare a meal efficiently. This is a classic example of concurrency: managing multiple tasks at once rather than one after the other so that all tasks progress efficiently.

Why does Concurrency Matter?

Concurrency, by enabling tasks to run simultaneously, delivers faster performance, improved resource utilization, and highly responsive user interfaces. Applications can progress with various independent tasks without waiting for other processes to finish. This is especially critical in modern multi-core computing and distributed systems.

- Improved Responsiveness: In interactive applications such as web browsers or mobile apps, we expect the user interface to remain responsive even when background tasks are running. A concurrent application can handle user input while simultaneously loading data, performing computations, or making network requests. Without concurrency, an application would freeze while performing a long-running background task.

- Optimal Resource Utilization: Modern systems frequently wait for external events, such as disk I/O or network responses. In a system without concurrency, the CPU would sit idle during these waits. By switching to other tasks during wait times, a concurrent system makes better use of available resources.

- Increased Performance and Throughput: Concurrency maximizes CPU utilization, particularly in I/O-bound tasks, where the system can run other tasks while waiting for network or disk input.

- Complex Task Handling: Applications running on concurrent systems can run background tasks (such as file saving or network calls) without interrupting the user’s workflow.

- Scalability: Servers rely on concurrency to handle thousands or millions of user requests. Concurrent systems manage many requests in overlapping time frames, ensuring high throughput.

- Modularity: With concurrency, developers can structure their systems as independent components or tasks that interact with each other. This structuring leads to better design and maintainability.
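The resource-utilization point can be sketched with a small timing comparison. This is an illustration under assumptions: `fake_io` is a hypothetical stand-in for a network or disk call, and the delays are arbitrary.

```python
import time
from concurrent.futures import ThreadPoolExecutor

def fake_io(seconds):
    """Hypothetical I/O call: the thread simply waits."""
    time.sleep(seconds)
    return seconds

delays = [0.2, 0.2, 0.2]

# One after the other: the waits add up.
start = time.perf_counter()
sequential = [fake_io(d) for d in delays]
sequential_time = time.perf_counter() - start

# Concurrently: the waits overlap.
start = time.perf_counter()
with ThreadPoolExecutor(max_workers=3) as pool:
    overlapped = list(pool.map(fake_io, delays))
concurrent_time = time.perf_counter() - start

print(f"sequential: {sequential_time:.2f}s, concurrent: {concurrent_time:.2f}s")
```

The results are identical in both runs; only the total waiting time differs, because the three waits overlap instead of queuing up.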

Concurrency vs. Parallelism

Concurrency and parallelism are often used interchangeably, but they have several key differences, as summarized in the following table:

| Concurrency | Parallelism |
|---|---|
| Concurrency deals with lots of things at once. | Parallelism does lots of things at once. |
| Multiple tasks are in progress during overlapping time periods. | Multiple tasks are executed at the same instant. |
| Concurrent tasks may be interleaved on a single CPU. | Parallelism requires multiple processors or cores. |
| Concurrency focuses on managing multiple tasks. | Parallelism focuses on performing multiple tasks simultaneously. |
| Concurrency is a design principle. | Parallelism is a hardware capability. |
| Concurrency increases the amount of work completed per unit of time. | Parallelism improves the system’s throughput and computational speed. |
| Control flow is often non-deterministic because the interleaving of tasks can vary between runs. | Control flow can be deterministic when the parallel tasks are independent of one another. |
| Debugging can be challenging because bugs may depend on timing. | Debugging is usually simpler when the parallel tasks share no state. |

Concurrency in Operating Systems

We have covered concurrency in general. Now, let us look at concurrency in the operating system, which has to coordinate several kinds of activity at the same time:

- Multiple user applications

- Background services

- Hardware interrupts

- System processes

The operating system scheduler determines how CPU time is distributed among processes and threads. In a single-core system, the scheduler gives the illusion of simultaneous execution by rapidly switching between tasks. On multi-core systems, some tasks actually run in parallel.

This ability to coordinate multiple tasks/activities forms the foundation of multitasking environments.

Processes and Threads

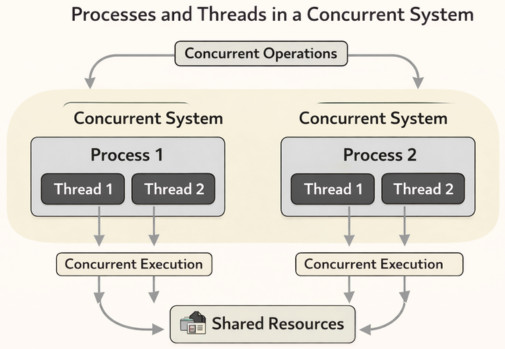

Concurrency is typically implemented using processes or threads.

A diagram depicting concurrent execution using processes and threads is shown below:

Processes

A process is an independent unit of a program in execution. Each process has its own system resources and memory space. Processes are isolated from one another, which increases safety but makes communication more complex.

Threads

Threads are the smallest units of execution within a process. A process can have multiple threads, and they share the same memory space. This makes communication easier, but it introduces challenges such as synchronization and data consistency. Threads are lightweight and efficient and are commonly used to achieve concurrency within applications.

Concurrency Models

Concurrency models define how different parts of a concurrent program or system execute, communicate, and manage shared state to perform tasks simultaneously and efficiently. These models offer different approaches to concurrency.

Here are a few important concurrency models:

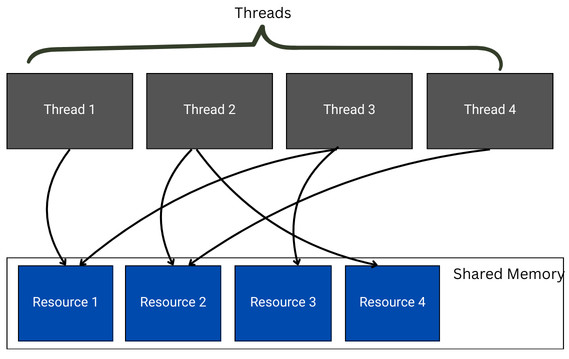

Shared Memory Model

This is a traditional concurrency model in which multiple threads access a shared memory space to read and write data. This arrangement is shown in the diagram below:

Shared memory mode requires manual synchronization. Synchronization mechanisms such as locks, semaphores, and mutexes are used to prevent data corruption and conflicts.

- Advantage: Communication between threads is efficient because they read and write the same memory.

- Advantage: The programming style is familiar to most developers.

- Disadvantage: There is a risk of race conditions.

- Disadvantage: Debugging is complex.
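As a minimal sketch of the shared memory model, the following uses Python's `threading.Lock` to protect a shared counter. The names `counter`, `counter_lock`, and `add_many` are chosen for illustration.

```python
import threading

counter = 0                       # shared state, visible to every thread
counter_lock = threading.Lock()   # guards every update to counter

def add_many(n):
    global counter
    for _ in range(n):
        with counter_lock:        # only one thread at a time runs this block
            counter += 1

threads = [threading.Thread(target=add_many, args=(10_000,)) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(counter)  # 40000
```

Because every increment happens while holding the lock, no update can be lost, at the cost of threads occasionally waiting for one another.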

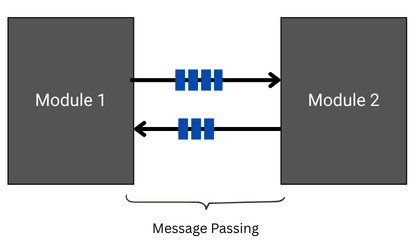

Message Passing Model

In the message-passing model, tasks/modules communicate by exchanging messages rather than sharing memory. This model reduces the risk of data corruption because each task manages its own state. The message passing model is shown below:

- Actors: Independent entities with private state that communicate via asynchronous messages.

- Channels (CSP): Conduits through which concurrent tasks exchange values, as used by goroutines in languages like Go.

- Advantage: Since each module manages its own state, safety improves.

- Advantage: Reasoning about data is easier.

- Disadvantage: Message passing can add overhead.
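A minimal sketch of message passing in Python, assuming a thread-safe `queue.Queue` as the channel. The names `inbox`, `DONE`, `producer`, and `consumer` are illustrative. The two threads never touch each other's data; they only exchange messages.

```python
import queue
import threading

inbox = queue.Queue()   # the channel between the two threads
DONE = object()         # sentinel message: tells the consumer to stop
received = []           # written only by the consumer thread

def producer():
    for n in range(5):
        inbox.put(n * n)        # send a message
    inbox.put(DONE)

def consumer():
    while True:
        msg = inbox.get()       # block until a message arrives
        if msg is DONE:
            break
        received.append(msg)

workers = [threading.Thread(target=producer), threading.Thread(target=consumer)]
for w in workers:
    w.start()
for w in workers:
    w.join()

print(received)  # [0, 1, 4, 9, 16]
```

No explicit lock appears in this code; the queue handles the synchronization internally, which is where the message-passing overhead comes from.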

Event-Driven Model

In event-driven systems, an event loop, typically running on a single thread, takes events from a queue and dispatches them to handlers. The model is well suited to I/O-bound applications and reduces context-switching overhead.

Tasks respond to events such as user input or network activity. The model is commonly used in web servers and GUI frameworks.

- Advantage: It scales well for I/O-bound tasks.

- Advantage: It uses resources efficiently.

- Disadvantage: The control flow can be hard to follow.

- Disadvantage: Managing many callbacks can become challenging.
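The core of an event loop can be sketched in a few lines. This is a toy illustration, not how any particular framework is implemented; `on`, `emit`, and `run` are hypothetical helper names.

```python
from collections import deque

handlers = {}        # event type -> list of handler functions
events = deque()     # pending events, processed in arrival order
event_log = []

def on(event_type, handler):
    """Register a handler for an event type."""
    handlers.setdefault(event_type, []).append(handler)

def emit(event_type, payload):
    """Queue an event; it is handled later by the loop."""
    events.append((event_type, payload))

def run():
    """Single-threaded loop: take one event at a time and dispatch it."""
    while events:
        event_type, payload = events.popleft()
        for handler in handlers.get(event_type, []):
            handler(payload)

def handle_request(path):
    event_log.append(f"request {path}")
    emit("response", f"200 for {path}")   # a handler may queue more events

def handle_response(text):
    event_log.append(f"response {text}")

on("request", handle_request)
on("response", handle_response)
emit("request", "/a")
emit("request", "/b")
run()
print(event_log)
# ['request /a', 'request /b', 'response 200 for /a', 'response 200 for /b']
```

Both requests are accepted before either response is produced, which shows how one thread can keep several pieces of work in progress.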

Functional/Immutable Programming

This model uses immutable data structures to avoid side effects. Because data that never changes cannot be modified by two tasks at once, the race conditions caused by shared mutable state disappear, making concurrency safer.
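A small sketch of the idea in Python, assuming a frozen dataclass as the immutable structure. `Account` and `with_deposit` are hypothetical names used only for illustration.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, replace

@dataclass(frozen=True)       # instances cannot be modified after creation
class Account:
    owner: str
    balance: int

def with_deposit(account, amount):
    """Return a new Account; the original is never changed."""
    return replace(account, balance=account.balance + amount)

base = Account("alice", 100)

# Several threads read the same object safely because none can mutate it.
with ThreadPoolExecutor(max_workers=3) as pool:
    updated = list(pool.map(lambda amt: with_deposit(base, amt), [10, 20, 30]))

print(base.balance, [a.balance for a in updated])  # 100 [110, 120, 130]
```

Each task produces its own new value, so no lock is needed around `base`.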

Data Parallelism

This model distributes data across multiple processors or threads, where each unit performs the same operation on a portion of the data (e.g., MapReduce, CUDA).
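The structure of data parallelism can be sketched as follows: split the data, apply the same function to every chunk, then combine the partial results. The chunk size and the `sum_of_squares` function are arbitrary choices for illustration. One caveat: in standard CPython, threads do not speed up CPU-bound work, so this shows the shape of the technique; swapping in `ProcessPoolExecutor` is one way to run the chunks on separate cores.

```python
from concurrent.futures import ThreadPoolExecutor

def sum_of_squares(chunk):
    """The same operation, applied independently to each chunk."""
    return sum(x * x for x in chunk)

data = list(range(1, 101))
chunk_size = 25
chunks = [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]

with ThreadPoolExecutor(max_workers=4) as pool:
    partials = list(pool.map(sum_of_squares, chunks))

total = sum(partials)   # combine the partial results
print(total)  # 338350
```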

Assembly Line (Pipeline)

In the assembly line model, tasks are broken into stages, with each thread handling one stage, similar to a factory assembly line, providing good hardware utilization without shared state.
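A minimal pipeline sketch, assuming two stages connected by queues. The `stage` helper and the `STOP` sentinel are illustrative names. Each thread owns one stage and passes its output to the next.

```python
import queue
import threading

STOP = object()   # sentinel that shuts the pipeline down

def stage(func, inbox, outbox):
    """Apply func to each item from inbox and forward the result."""
    while True:
        item = inbox.get()
        if item is STOP:
            outbox.put(STOP)    # pass the shutdown signal downstream
            break
        outbox.put(func(item))

q1, q2, q3 = queue.Queue(), queue.Queue(), queue.Queue()
workers = [
    threading.Thread(target=stage, args=(str.strip, q1, q2)),  # stage 1: trim
    threading.Thread(target=stage, args=(str.upper, q2, q3)),  # stage 2: uppercase
]
for w in workers:
    w.start()

for raw in ["  apple ", " pear", "fig  "]:
    q1.put(raw)
q1.put(STOP)

for w in workers:
    w.join()

output = []
while True:
    item = q3.get()
    if item is STOP:
        break
    output.append(item)

print(output)  # ['APPLE', 'PEAR', 'FIG']
```

While stage 2 is uppercasing one item, stage 1 can already be trimming the next, and the stages share nothing except the queues between them.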

What are the Common Concurrency Challenges?

Concurrency challenges typically arise when multiple tasks or processes execute at overlapping times, especially when they share resources. Coordinating access to those resources adds complexity. Here are some common concurrency challenges:

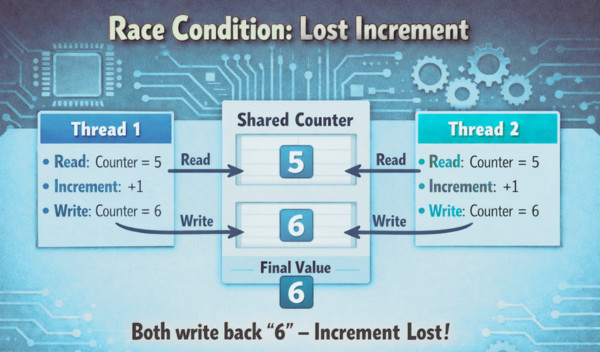

Race Conditions

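A race condition occurs when multiple threads or processes access and modify shared data without coordination, so the outcome depends on the order and timing in which their operations happen to run. As a result, the outcome may be unpredictable.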

For example, consider the following arrangement demonstrating a race condition:

If there is a shared counter, two threads incrementing it simultaneously may cause a race condition. Without synchronization, both might read the same initial value, increment it, and write back the same result, effectively losing one increment.
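The lost increment can be traced by hand. The sketch below does not use real threads; it writes out one unlucky interleaving step by step so the result is reproducible. The variable names are illustrative.

```python
counter = 0

# Both "threads" read before either one writes.
read_by_a = counter        # A reads 0
read_by_b = counter        # B reads 0

counter = read_by_a + 1    # A writes 1
counter = read_by_b + 1    # B also writes 1, overwriting A's update

print(counter)  # 1, although two increments ran
```

With real threads, whether this interleaving occurs depends on timing, which is why such bugs can be hard to reproduce.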

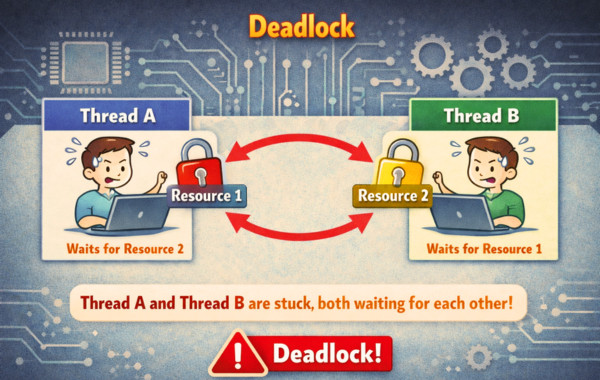

Deadlocks

When two or more threads or processes wait indefinitely for resources held by each other, a cycle of dependencies called deadlock is created. In this situation, tasks cannot proceed further, and the entire system stalls.

Consider two threads, Thread A and Thread B. Thread A holds Resource 1 and waits for Resource 2. Thread B holds Resource 2 and waits for Resource 1. This is a deadlock situation.
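The Thread A / Thread B scenario can be reproduced safely in Python by giving the second acquisition a timeout, so the demonstration ends instead of hanging. This is a sketch: the barriers exist only to force the unlucky timing, and all names are illustrative.

```python
import threading

resource_1 = threading.Lock()
resource_2 = threading.Lock()
both_holding = threading.Barrier(2)   # wait until each thread holds its first lock
both_tried = threading.Barrier(2)     # wait until each thread has tried its second
got_second = {}

def worker(name, first, second):
    with first:
        both_holding.wait()
        # A real deadlock would block here forever; the timeout ends the demo.
        acquired = second.acquire(timeout=0.2)
        got_second[name] = acquired
        if acquired:
            second.release()
        both_tried.wait()

a = threading.Thread(target=worker, args=("A", resource_1, resource_2))
b = threading.Thread(target=worker, args=("B", resource_2, resource_1))
a.start()
b.start()
a.join()
b.join()

print(got_second)   # neither thread obtained its second resource
```

A common way to avoid this situation is to have every thread acquire the resources in the same order, so a cycle of waiting cannot form.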

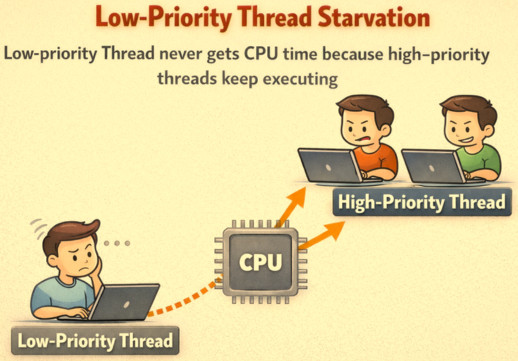

Starvation

Starvation occurs when a thread or process is perpetually denied the resources it needs. A common cause is that other threads/processes monopolize those resources.

For example, a low-priority thread never gets CPU time because high-priority threads keep executing.

Livelock

In a livelock, threads or processes continuously change their states in response to one another without making progress. Unlike in a deadlock, they are not blocked; they stay busy but accomplish nothing.

For example, a livelock occurs when two threads repeatedly yield to each other, each thinking the other needs the resource first.

Synchronization Overhead

Synchronization overhead is the additional time spent managing access to shared resources. This can degrade performance.

For example, excessive use of locks can slow down a program, as there is a considerable overhead of acquiring and releasing locks.

Data Inconsistency (Database)

In databases, concurrent transactions can lead to the following anomalies:

- Lost Updates: Two database transactions update the same data. One overwrites the other, causing the first update to be lost.

- Dirty Reads: A transaction reads data that has been updated by another transaction but not yet committed.

- Unrepeatable Reads: A transaction reads the same data twice, but between the two reads, another transaction has modified it. Hence, the second read sees a different value.

- Phantom Reads: A transaction executes the same query twice and receives different sets of rows, because another transaction inserted or deleted rows between the two executions.

Concurrency Mitigation Strategies

- Locks and Mutexes: A thread, process, or transaction acquires a lock before entering a critical section. An exclusive (write) lock admits only one holder at a time, while a shared (read) lock lets several readers proceed together.

- Semaphores: A semaphore is a counter that limits how many threads/processes can access a common resource at the same time.

- Atomic Operations: Operations (read-modify-write) are performed as a single, indivisible step.

- Thread Pools: A thread pool is a collection of worker threads managed by the system to optimize resource usage and avoid excessive thread creation.

- Lock-Free Data Structures: Data structures that do not require locks are used to minimize the risk of deadlocks and reduce overhead.

- Proper Design: Systems are designed to minimize shared state and dependencies between threads.
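As one example of these strategies, the sketch below uses a semaphore to cap the number of threads using a resource at once. The limit of two, the sleep, and the names `slots` and `use_resource` are assumptions made for illustration.

```python
import threading
import time

slots = threading.Semaphore(2)    # at most two workers inside at once
state_lock = threading.Lock()     # protects the two counters below
active = 0
max_active = 0

def use_resource():
    global active, max_active
    with slots:                   # blocks while two workers are already inside
        with state_lock:
            active += 1
            max_active = max(max_active, active)
        time.sleep(0.05)          # pretend to use the shared resource
        with state_lock:
            active -= 1

threads = [threading.Thread(target=use_resource) for _ in range(6)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(max_active)   # never more than 2
```

The example combines two of the strategies listed above: the semaphore bounds access to the resource, and a plain lock keeps the bookkeeping counters consistent.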

Concurrency in Modern Applications

Concurrency means multiple computations are in progress simultaneously. In modern applications, multiple tasks are managed simultaneously, enhancing performance and responsiveness in multi-core systems, particularly in cloud computing environments. It occurs whenever there are multiple computers in a network, multiple applications running on a single computer, or multiple processors in a single computer.

- Websites handling multiple simultaneous users.

- Mobile apps performing some of their processing on servers (“in the cloud”).

- Graphical user interfaces (GUIs) and IDEs almost always run background tasks that do not interrupt the user.

- Web Servers: Concurrency is used to handle thousands of simultaneous connection requests. Each request is an independent task, often managed using threads or asynchronous I/O.

- Databases: To allow multiple users to read and write data simultaneously without corruption, databases use concurrency control.

- Mobile Apps: Background threads handle network operations while keeping the UI responsive.

- Cloud Systems: Cloud infrastructure relies heavily on concurrency to handle high traffic volumes and distributed workloads.

Modern applications build on concurrency through several related techniques, which also help mitigate the challenges described earlier.

Asynchronous Programming

Asynchronous (Async) programming aligns closely with concurrency. In async programming, tasks begin and then yield control to other tasks while waiting for external events.

For example, when a program makes a network request, it does not block until it receives a response. Instead, it continues executing other tasks and handles the response later when it arrives. This way, resources are freed up for other tasks.

The async programming model is particularly effective for I/O-bound systems.
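A short sketch of this behavior using Python's `asyncio`. The `fetch` coroutine is hypothetical: `asyncio.sleep` stands in for waiting on a real network response.

```python
import asyncio

async def fetch(name, delay):
    """Hypothetical request: the sleep stands in for network latency."""
    await asyncio.sleep(delay)    # yields control to the event loop while waiting
    return f"{name} done"

async def main():
    # Start all three "requests" and wait for them together.
    return await asyncio.gather(
        fetch("slow", 0.2),
        fetch("medium", 0.1),
        fetch("fast", 0.05),
    )

results = asyncio.run(main())
print(results)  # ['slow done', 'medium done', 'fast done']
```

The three waits overlap on a single thread, and `asyncio.gather` returns the results in the order the coroutines were passed in, not the order in which they finished.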

Multitasking

Multitasking is the ability of an operating system to run multiple programs over the same period. Multitasking is what the user sees; concurrency is the underlying design and coordination principle that makes it possible.

Distributed Systems

In distributed systems, concurrency extends across machines:

- Multiple machines execute tasks simultaneously.

- Services interact over a network.

- It is important to maintain data consistency across nodes.

Given communication delays and partial failures, concurrency control in distributed systems is more complex. In distributed environments, concepts such as distributed locks, consensus algorithms, and eventual consistency are used to manage concurrency.

Concurrency Benefits

- Improved Performance: With concurrency, different parts of a program run independently, maximizing CPU usage and increasing overall task completion speed, especially on multi-core processors.

- Better Responsiveness: Concurrency enables time-consuming operations (e.g., file downloads or database queries) to run in the background, freeing the main application thread to handle user input and preventing the app from freezing.

- Higher Scalability: It is easier to scale applications to handle increased workloads by effectively utilizing additional processor cores.

- Efficient Resource Utilization: Concurrency makes efficient use of system resources, including CPU, memory, and I/O devices, reducing idle time by allocating them to whichever threads/processes are ready to use them.

- Improved User Experience: Concurrent applications feel faster and more interactive as they can manage multiple operations simultaneously.

- Reduced Waiting Time: In multithreaded systems, tasks spend less time waiting because they do not need to wait for a single, long operation to finish. This allows other tasks to proceed immediately.

- Fault Tolerance: Splitting work into separate tasks, especially separate processes, provides better isolation. If one task fails, it does not necessarily cause the entire application to crash.

The Future of Concurrency

Concurrency becomes even more essential as hardware evolves. Today, multi-core processors are standard, and distributed cloud environments are the norm. Several trends are likely to make it more prominent still:

- Structured Concurrency for Better Task Management: Many modern programming languages (like Swift, which also provides actors) are adopting structured concurrency, in which spawned tasks are tied to the scope that created them. This reduces bugs such as leaked or orphaned tasks.

- Asynchronous Paradigms: In modern development environments such as Node.js, there is a shift from thread-based to event-loop-driven and async/await models. Concurrent code reads like synchronous code, which simplifies backend development.

- Memory Safety and Zero-Cost Abstractions: Newer models prioritize safety, with compilers and runtimes preventing data races, in some cases at compile time, rather than relying on error-prone manual locking.

- Improved Parallelism Tools: Newer C++ library features, such as atomic smart pointers, along with better task scheduling, improve throughput on modern CPU architectures.

Conclusion

Concurrency is a foundational concept in computer science that enables systems to manage multiple tasks efficiently. Concurrency is often confused with parallelism. However, parallelism is about executing multiple tasks simultaneously. Concurrency, on the other hand, is about coordinating activities that overlap in time, not necessarily executing them simultaneously. Concurrency focuses more on structure and task management.

Concurrency appears throughout software, from operating systems and web servers to mobile apps and distributed cloud systems. It improves responsiveness, resource utilization, user experience, and scalability. However, it introduces challenges such as race conditions and deadlocks.

As modern systems grow more complex and hardware continues to evolve, understanding concurrency will remain one of the most important skills for developers and engineers alike.

Frequently Asked Questions (FAQs)

- What is concurrency in simple terms?

Concurrency is the ability of a system to manage multiple tasks at overlapping periods of time. It does not necessarily mean tasks run at the exact same moment, but rather that the system efficiently switches between them so each makes progress.

- Why is concurrency important in modern applications?

Concurrency improves responsiveness, scalability, and resource utilization. It allows applications to handle user input, network requests, and background processing without freezing or slowing down, which is critical for web apps, mobile apps, and cloud systems.

- Does concurrency require multiple CPU cores?

No. Concurrency can exist on a single-core processor through rapid context switching between tasks. However, multiple cores enable parallelism, allowing tasks to run simultaneously.

- What role does concurrency play in database systems?

Concurrency in database systems is pivotal for efficient and simultaneous transaction processing. It enables multiple users to access and modify the database concurrently, enhancing overall system performance. Through techniques like locking and isolation levels, concurrency control ensures data integrity, preventing conflicts and ensuring accurate results.

- How can concurrency impact the responsiveness of a user interface (UI)?

Concurrency contributes significantly to UI responsiveness. By handling tasks concurrently, such as UI rendering and data fetching, the user interface remains smooth and interactive.
