Ralph Loop Explained: A Smarter Agentic Loop for Building and Testing Software

If you don’t know about the “Ralph Loop”, it’s likely because you are still trying to use AI the “old” way – like a really smart chatbot that requires babysitting.

Popularized by Geoffrey Huntley and named after Ralph Wiggum – the endearingly hapless but undeniably tenacious Simpsons character – the Ralph Loop is built on a simple yet powerful idea: instead of hitting enter once and taking whatever the AI generates, you put the agent in a loop that keeps iterating until the work is really done.

In practice, that means the AI does not just write code or content – it writes and tests and fixes and retries everything itself. Over and over, until it meets a certain definition of “done”.

It sounds almost too simple. But that’s exactly the point.


What is the Ralph Loop?

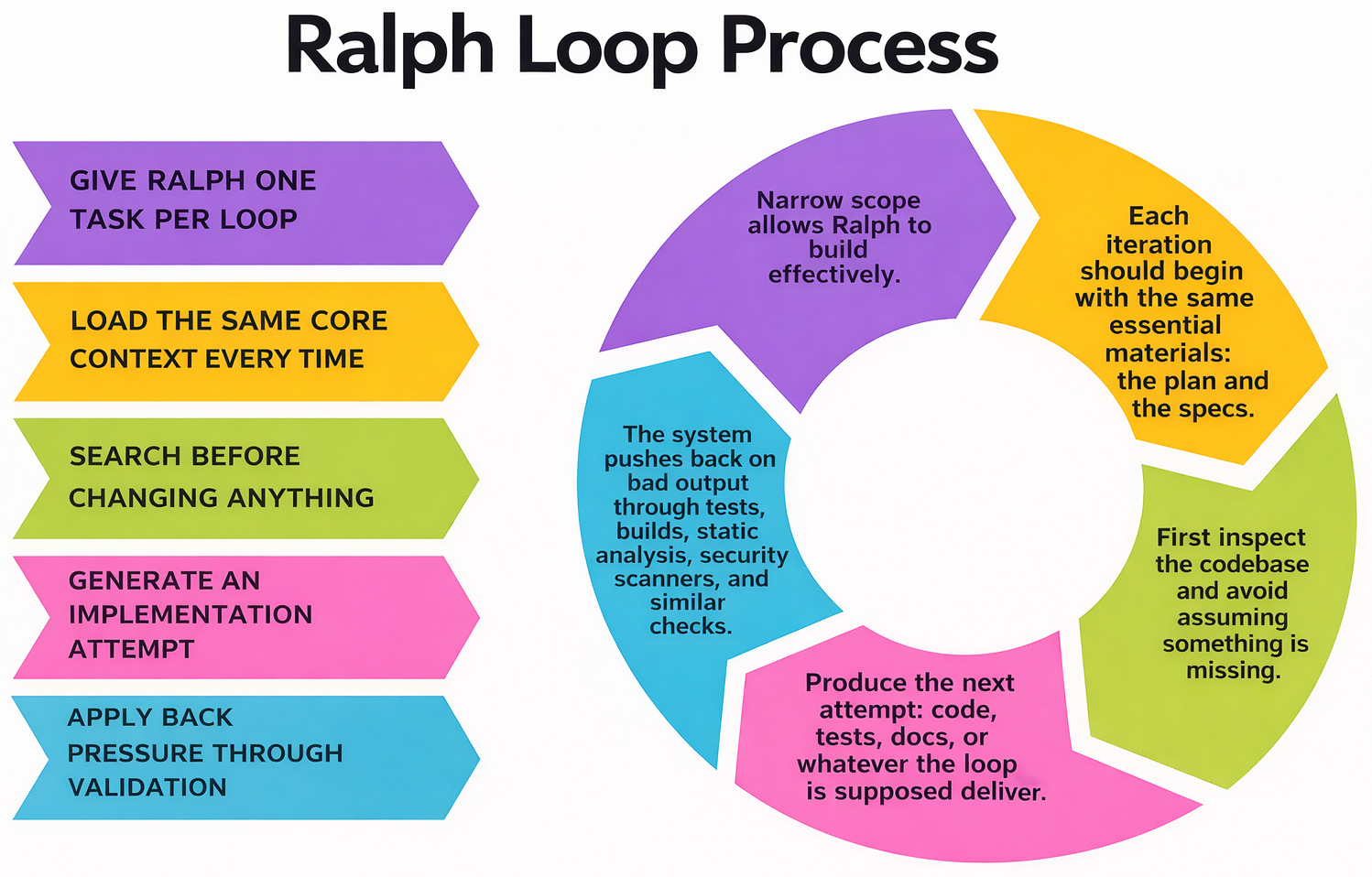

At its heart, the Ralph Loop is a simple pattern for getting AI agents to finish tasks, rather than just attempt them.

Instead of prompting once and checking the output manually, you give the agent a goal, a way to evaluate its own output, and a loop that keeps it trying until it gets there. Each pass looks like this:

- Generate an output

- Verify that it does what you want (tests/validation/rules)

- Fix what’s broken

- Try again

And repeat until it passes.

```shell
# Pipe the same prompt file into the agent on every iteration; runs until interrupted
while :; do cat PROMPT.md | agent ; done
```

What makes this different from typical AI usage is persistence.
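The one-liner never checks its own work and never exits. As a rough sketch, the same loop with a verification gate could look like the block below. The function name `ralph_loop` and its two arguments (an agent command and a check command) are assumptions made for illustration, and `agent` remains a placeholder for whatever CLI you actually use.

```shell
# Sketch: rerun the agent until the check command succeeds.
# Usage: ralph_loop "<agent command>" "<check command>"
# Both commands are placeholders supplied by the caller.
ralph_loop() {
  agent_cmd=$1
  check_cmd=$2
  attempt=0
  # Check first, so work that already passes costs no agent run.
  until sh -c "$check_cmd"; do
    attempt=$((attempt + 1))
    echo "attempt $attempt: checks failing, running agent" >&2
    # Every run gets the same prompt file and no chat history.
    sh -c "$agent_cmd" < PROMPT.md || echo "agent exited non-zero" >&2
  done
  echo "checks passed after $attempt agent run(s)"
}
```

You might call it as `ralph_loop "agent" "make test"`, where `make test` stands in for your own test command. This version is still unbounded: if the checks can never pass, it will loop forever, which is why retry limits matter.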

Why Traditional AI Workflows Fall Short

Most teams are still using AI in a way that looks productive, but breaks down pretty quickly in real work.

The typical workflow goes like this: you write a prompt, get a response, scan it, fix what’s wrong, maybe prompt again, and repeat until it’s usable. It works for small tasks, but the moment things get even slightly complex, the cracks start to show.

The biggest issue is that the responsibility still sits with the human.

You’re the one validating the outputs. You’re the one catching errors. You’re the one deciding whether something is “good enough”. AI might speed things up, but it doesn’t really take work off your plate; it just shifts it around.

Then there’s the inconsistency problem.

Even with a well-written prompt, outputs can vary. One response is solid, the next one misses edge cases, and suddenly you’re double-checking everything anyway. That lack of reliability makes it hard to trust AI for anything beyond low-risk tasks.

And finally, there’s no built-in feedback loop.

Traditional AI usage is mostly stateless. Each prompt is a fresh attempt, with no structured way to learn from mistakes or improve results over time. If something fails, you start over. There’s no system pushing the output toward correctness, just more prompting.

That’s why, despite all the hype, a lot of teams still treat AI as an assistant rather than a dependable part of their workflow.

What Problems Does the Ralph Loop Solve?

- The Ralph Loop avoids the context gutter or context rot problem, where the model starts losing track of what’s important once the conversation gets too long. Instead of relying on long conversations, each iteration starts fresh. The “memory” lives in files – code, tests, specs – not in chat history. That keeps the agent focused and consistent.

- Ralph also avoids the one-shot problem. Instead of stopping at the first attempt, it keeps iterating until the output meets a defined standard.

- The loop relies on objective feedback from tests, linters, and code analysis. That fixes a weak spot in typical AI workflows, where validation tends to be subjective.

- With this loop, you can tackle the output inconsistencies that tend to follow AI systems. The agent doesn’t stop at the first output – it keeps refining until it passes checks. That filters out much of the run-to-run randomness.

- The Ralph Loop uses explicit criteria to decide when it’s “done”, unlike open-ended AI sessions that have no hard stop.

- The focus shifts from crafting prompts (prompt engineering), which can be hit-or-miss, to building better systems and a good environment for the agent to work in.
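To make the "memory lives in files" point concrete, here is a minimal sketch of how each fresh iteration could be handed its context. The helper name `build_prompt` and the `FAILURE.md` file are hypothetical; the only file the article itself names is `PROMPT.md`.

```shell
# Sketch: assemble the input for a fresh iteration from files on disk.
# PROMPT.md holds the task; FAILURE.md (hypothetical) holds the last failed check.
build_prompt() {
  cat PROMPT.md
  # Only mention a previous failure if one was actually recorded.
  if [ -s FAILURE.md ]; then
    printf '\n## Previous attempt failed\n\n'
    cat FAILURE.md
  fi
}
```

A loop would then run something like `build_prompt | agent` on each pass. Nothing carries over from the previous conversation; whatever the agent needs to know has to be written to disk first.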

Ralph Loops and Agentic Loops

An agentic loop is a broader category that includes Ralph loops too. Agentic loops describe systems in which AI agents plan steps, use memory, employ multiple tools, and even work together. It’s a flexible concept, and there are many ways to implement it.

The Ralph Loop, on the other hand, is much more specific. The focus is on a single task. No long memory chains. No heavy planning layers. No complex orchestration. That narrowness sidesteps the problems that tend to sink more elaborate agent setups:

- Too much context to manage

- Too many moving parts

- Unclear signals for success

Broader agentic loops are a good fit when you’re planning a product, exploring ideas, or coordinating multiple systems. But when your goal is concrete and clearly testable, a Ralph Loop is usually the better choice.

What You Need to Implement the Ralph Loop Effectively

The Ralph Loop sounds simple on paper, but in practice, it only works well when the setup around the agent is solid.

An agent can’t “figure it out” in a vacuum. It needs clear instructions, clear boundaries, and a reliable way to know whether it’s getting closer to the right answer. That’s where the core pillars come in.

1. A PRD or Task Brief That Defines the Outcome

The first pillar is a clear product requirement document, task brief, or spec.

This is what tells the agent what it’s actually trying to achieve. Without it, the loop turns into random trial and error. The agent may keep producing output, but it won’t necessarily produce the right output. A good brief answers questions like:

- What needs to be built or fixed?

- What does success look like?

- What should not be changed?

- What constraints matter?

- Are there edge cases or acceptance criteria?

2. An agent.md File That Explains How to Operate

If the PRD defines what needs to happen, the agent.md file defines how the agent should behave while doing it. It typically covers:

- Coding conventions

- Project structure

- Commands to run

- Files or directories to avoid touching

- How to write tests

- How to format responses or commits

- What to do when something fails

Without this layer, agents tend to make bad assumptions. They may write code in the wrong place, use the wrong commands, ignore local conventions, or keep looping on avoidable mistakes.

A strong agent.md reduces drift. It keeps the agent aligned with how the team actually works, not just what the model thinks is reasonable.

3. Tests That Define “Done”

This is the real backbone of the Ralph Loop.

The loop only works if the agent has a way to check whether its output is correct. In most engineering workflows, that means tests.

Instead of relying on a human to inspect every attempt, the agent can run the suite, see what failed, and use that feedback to improve the next iteration. Depending on the project, the suite can include:

- Integration tests

- End-to-end tests

- Regression checks

- Snapshot tests

- API contract tests

The more directly the tests map to the intended outcome, the more useful the loop becomes.

4. Linters and Static Checks That Catch the Obvious Early

Tests tell you whether the behavior is correct. Linters and static analysis tools catch much of what can still go wrong along the way, such as:

- Formatting issues

- Type errors

- Unused imports

- Broken conventions

- Unsafe patterns

- Low-quality code that technically passes tests

This matters because an agent that only optimizes for passing tests can still leave behind messy code. Linters, type checkers, and static analysis tools raise the quality bar. They force the agent to meet the team’s standards, not just squeak by with a green test run.
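One way to express this combined quality bar is a single gate that treats "done" as every check exiting with status 0. The sketch below is an assumption about how you might wire that up, not a prescribed implementation; the function name `all_checks_pass` is hypothetical and the check commands are whatever your project already uses.

```shell
# Sketch: "done" means every check command exits 0.
# Each argument is one shell command; they run in the order given,
# so list fast checks (lint, types) before slower ones (tests).
all_checks_pass() {
  for check in "$@"; do
    if ! sh -c "$check"; then
      # Stop at the first failure so the agent fixes one thing at a time.
      echo "FAILED: $check"
      return 1
    fi
  done
  echo "all checks passed"
}
```

For example, `all_checks_pass "npm run lint" "npm test"` would only report success when both the linter and the test suite are green. Those two commands are placeholders for your own tooling.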

How Does the Loop Work?

Other Implementation-Level Factors That Matter

The PRD, agent.md, tests, and linters are the foundation. But a few other details make a big difference in whether the loop is actually useful in production.

Tight Feedback Cycles

The faster the validation step runs, the better the loop performs. If every attempt takes ten minutes to test, the agent slows to a crawl. Fast unit tests, scoped checks, and incremental validation make the whole loop more effective.

Clear Failure Output

Agents do better when failure messages are specific. A vague “build failed” doesn’t help much. A stack trace, assertion error, or lint message gives the agent something concrete to fix.

In other words, the loop gets stronger when the feedback is not just fast, but readable.
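As an illustration, a thin wrapper can turn any failing check into a short, specific report that the next iteration reads. This is a sketch under assumptions: the name `run_check`, the `FAILURE.md` file, and the 40-line cutoff are all illustrative choices rather than part of any tool.

```shell
# Sketch: run one check; on failure, save a short report for the next iteration.
# Usage: run_check "<label>" "<command>"
run_check() {
  name=$1
  cmd=$2
  if output=$(sh -c "$cmd" 2>&1); then
    # A passing check clears any stale report.
    rm -f FAILURE.md
    return 0
  else
    code=$?
    {
      echo "# Failed check: $name"
      echo "Command: $cmd"
      echo "Exit code: $code"
      echo
      echo "Last lines of output:"
      # The end of the output usually holds the assertion or stack trace.
      printf '%s\n' "$output" | tail -n 40
    } > FAILURE.md
    return "$code"
  fi
}
```

Keeping the report short is deliberate: the agent gets the assertion or lint message it needs without wading through a full build log.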

Guardrails Around File Access and Scope

Agents should know what they’re allowed to change. If they can rewrite half the codebase to fix one failing test, they probably will. Good implementations put limits around file scope, command access, dependency changes, and risky operations.
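A file-scope guardrail can be as simple as comparing the paths an attempt touched against an allowlist. The sketch below assumes changed paths arrive on standard input, one per line (for example from `git diff --name-only`), and that allowed locations are given as path prefixes. The function name `check_scope` is hypothetical.

```shell
# Sketch: reject an attempt that touched files outside the allowed prefixes.
# Usage: <list of changed paths> | check_scope <prefix>...
check_scope() {
  status=0
  while IFS= read -r path; do
    allowed=no
    for prefix in "$@"; do
      case $path in
        "$prefix"*) allowed=yes ;;
      esac
    done
    if [ "$allowed" = no ]; then
      echo "out of scope: $path"
      status=1
    fi
  done
  return "$status"
}
```

With this in place, `git diff --name-only | check_scope src/ tests/` returns non-zero if the agent edited anything outside `src/` or `tests/` (both example prefixes), and the loop can discard that attempt instead of accepting it.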

Small, Well-Scoped Tasks

The Ralph Loop tends to work best when the task is narrow enough to be verified clearly. “Fix this failing test” is a better fit than “improve the architecture of the platform”. The broader the task, the fuzzier “done” becomes.

That doesn’t mean agents can’t work on large projects. It means large projects usually need to be broken into smaller loops.
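One possible way to break a large project into smaller loops is a checklist file, where each pass picks up the first unchecked item. The sketch below assumes a markdown-style task list with `- [ ]` and `- [x]` markers; the file layout and the function name `next_task` are illustrative assumptions.

```shell
# Sketch: print the first unchecked item from a checklist file.
# Usage: next_task <checklist file>
# Prints nothing when every item is checked off.
next_task() {
  sed -n 's/^- \[ \] //p' "$1" | head -n 1
}
```

Each Ralph Loop then works on exactly one item, and the outer process marks it done before moving to the next.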

Retry Limits and Stop Conditions

Not every loop converges, so define when it should stop:

- After a set number of retries

- When errors stop changing

- When the loop starts oscillating

- When human review is needed
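These conditions can be checked mechanically if each attempt leaves behind a fingerprint of its error output, for instance a checksum of the failure report. The sketch below is one assumed way to do it: the function name `stop_reason`, the reason labels, and the idea of passing the fingerprint history as arguments are all illustrative.

```shell
# Sketch: decide whether the loop should continue.
# Usage: stop_reason <max attempts> <fingerprint>...
# Fingerprints are one per attempt, oldest first (e.g. a checksum of the error output).
stop_reason() {
  max=$1
  shift
  count=$#
  prev2= prev= cur=
  # Walk the history so cur, prev, prev2 end up as the last three fingerprints.
  for fp in "$@"; do
    prev2=$prev
    prev=$cur
    cur=$fp
  done
  if [ "$count" -ge "$max" ]; then
    echo "retry-limit"
  elif [ "$count" -ge 2 ] && [ "$cur" = "$prev" ]; then
    echo "stalled"        # same errors twice in a row
  elif [ "$count" -ge 3 ] && [ "$cur" = "$prev2" ]; then
    echo "oscillating"    # flipping between two failure states
  else
    echo "continue"
  fi
}
```

Any answer other than `continue` is a natural point to stop the loop and hand the task to a human reviewer.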

Observability

If you’re running agents in a loop, you need visibility into what they’re doing. What did they change? Which checks failed? How many attempts did it take? Where did they get stuck?

Without that, debugging the loop becomes its own problem.
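A lightweight starting point is one structured log line per attempt. The sketch below appends JSON lines to a file; the function name `log_attempt`, the field names, and the assumption that values contain no quote characters are all illustrative simplifications.

```shell
# Sketch: append one JSON line per attempt to a log file.
# Usage: log_attempt <log file> <attempt number> <pass|fail> [failed check]
# Assumes the values contain no double quotes or backslashes.
log_attempt() {
  log_file=$1
  attempt=$2
  result=$3
  failed_check=${4:-}
  ts=$(date -u +%Y-%m-%dT%H:%M:%SZ)
  printf '{"ts":"%s","attempt":%s,"result":"%s","failed_check":"%s"}\n' \
    "$ts" "$attempt" "$result" "$failed_check" >> "$log_file"
}
```

With a log like this, questions such as "how many attempts did it take?" or "which check keeps failing?" become a matter of `grep` and `wc` rather than guesswork.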

Best Practices for Using the Ralph Loop

- Start with a loop you can understand; don’t automate blindly.

- Keep each loop focused on one task. Ralph works best when it does one thing at a time.

- Reset context every time – don’t let conversations grow. One way to do this is to create subagents, so that your primary context window acts more like a scheduler for them.

- Use strong tests because Ralph is only as good as the feedback it gets.

- Keep loops fast and tight, meaning lightweight tests and scoped tasks.

- Get a single loop working properly first, then scale to multi-agent implementations.

Ralph Loop vs Other Development Approaches

Most methodologies focus on how teams work together – planning, shipping, and improving software over time. The Ralph Loop is more about how work gets executed at the task level, especially when AI is involved.

Ralph Loop vs Waterfall

Waterfall is linear. You define everything upfront, build it, test it at the end, and hope things hold together. Feedback comes late. Fixes are expensive. And once you move forward, going back is painful.

The Ralph Loop flips that completely.

Instead of waiting until the end to validate, it builds feedback into every iteration. The agent keeps testing and fixing as it goes, so issues get caught early, before they compound.

Ralph Loop vs Agile

Agile already embraces iteration – small changes, frequent releases, continuous feedback. But most Agile workflows still rely on humans to drive that loop.

A developer writes code → QA tests it → feedback comes back → fixes are made → repeat.

The Ralph Loop compresses that cycle.

The agent takes on part of that inner loop – writing, testing, and fixing within a single flow. Instead of passing work between people for every iteration, a lot of that back and forth happens automatically.

Ralph Loop vs DevOps

DevOps focuses on automation, CI/CD, and reducing friction between development and operations. In many ways, the Ralph Loop builds on top of that.

CI pipelines already run tests and checks automatically. The difference is what happens next.

- Traditional CI: code fails → pipeline breaks → human steps in to fix it

- Ralph Loop: code fails → agent sees the failure → fixes it → retries automatically

Same signals, different responses.

Ralph Loop vs Prompt-Based AI Workflows

A typical prompt-based workflow looks like this:

- Ask → get output → review → fix → re-prompt

It’s manual, inconsistent, and hard to scale. The Ralph Loop replaces it with:

- Assign task → generate → validate → fix → repeat

No constant prompting. No babysitting every step. The key shift is from interaction to execution.

Where Does Ralph Loop Fit in All This?

- Agile helps you decide what to build

- DevOps helps you ship it reliably

- The Ralph Loop helps you get each task to a working state faster

It operates inside your existing workflow, tightening the feedback loop at the lowest level – where most of the time and friction actually live.

Is This a Step Closer to Agentic Workflows?

Much of today’s AI still behaves like an assistant – you ask, it responds.

The Ralph Loop brings things closer to real agents. You give it a task, define success, and the system works toward it on its own. It’s a small adjustment, but it changes how you think about AI: from something you interact with to something that executes.

And once that loop is established, the question moves from “What can AI help me with?” to “What can I safely delegate to it?”

